Key Takeaways

- Gradient descent is the core training algorithm for every neural network — choosing the right optimizer (Adam vs SGD) and learning rate is the single biggest factor in model training success

- The Adam optimizer converges faster than vanilla SGD on most deep learning tasks due to adaptive per-parameter learning rates — use it as your default unless you have a reason not to

- Mini-batch sizes of 32-256 are the industry standard — smaller batches generalize better but train slower; larger batches train faster but may converge to sharp minima with worse test accuracy

- Learning rate scheduling (reducing LR as training progresses) consistently improves final model accuracy vs. fixed learning rates — cosine annealing and ReduceLROnPlateau are practical starting points

- Vanishing gradients are the most common failure mode in deep networks — use ReLU activations, batch normalization, and residual connections to prevent them

Start With Adam, Then Tune

Gradient descent is the engine that makes neural networks learn. Without it, deep learning models would be random weight combinations with no way to improve. Understanding how it works — and how to tune it — is the difference between a model that trains in days and one that never converges.

This guide explains gradient descent from first principles, covers every major optimizer variant, and gives you a practical framework for applying it to real business AI projects.

What Is Gradient Descent in Deep Learning?

Gradient descent is an iterative optimization algorithm that minimizes a neural network’s loss function by repeatedly adjusting model weights in the direction of steepest descent. It computes the gradient (slope) of the error at the current weight values, then moves weights opposite to that gradient. Each iteration brings the model’s predictions closer to the training labels.

The term “gradient learning” captures the core idea: every parameter in a deep learning model learns by following gradients. A model’s entire knowledge — how to recognize an image, detect fraud, or forecast demand — is encoded in billions of weights, each shaped by thousands of gradient updates. For a broader view of the learning process — including loss function selection, the training-validation-test cycle, and why data quality sets the ceiling for any model — see our guide on why machines learn.

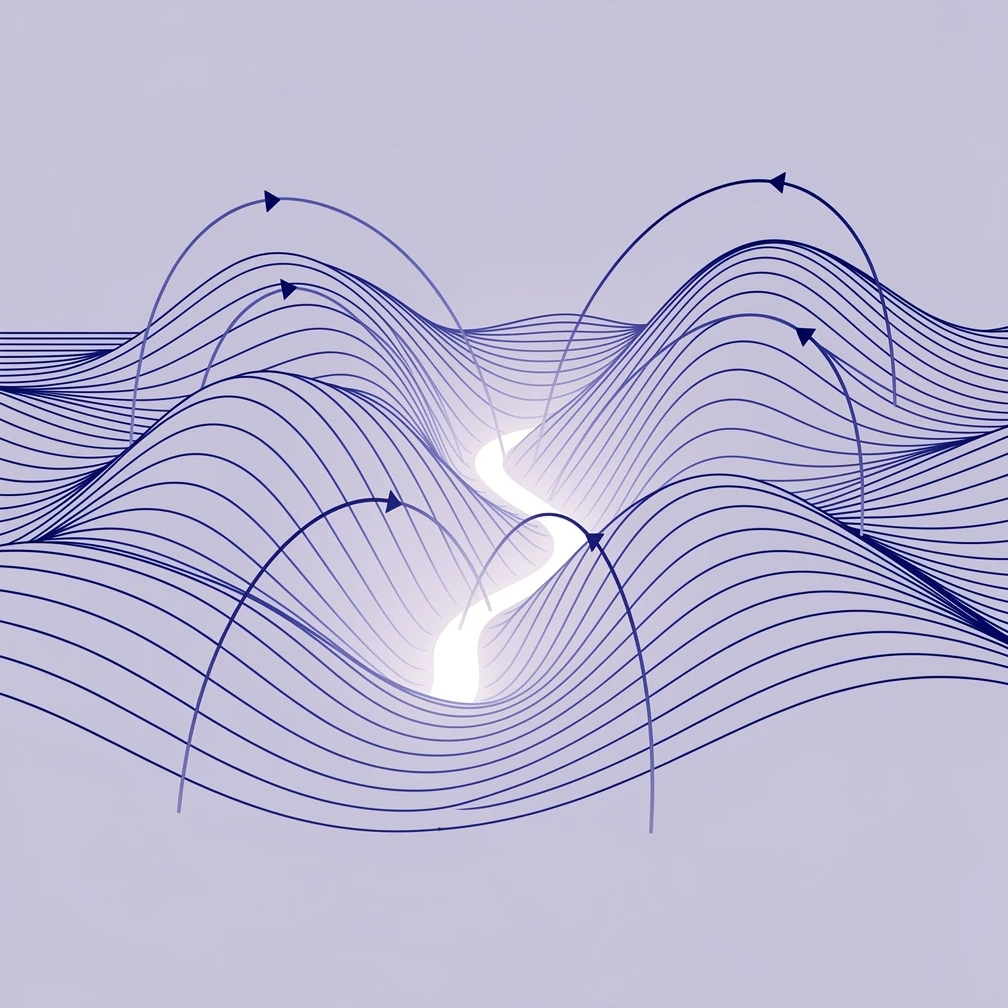

The Loss Surface and Why It Matters

Imagine a mountainous terrain in very high dimensions. Every point on that landscape represents a different set of model weights, and the elevation at each point is the model’s prediction error (loss). Gradient descent is the process of finding a valley — a region of low loss.

In practice, the loss surface of a deep network is not a simple bowl. It contains:

- Saddle points: flat regions where the gradient is near zero but the location isn’t a minimum

- Local minima: valleys that aren’t the global optimal solution

- Sharp minima: narrow valleys that generalize poorly to new data

- Flat minima: wide valleys that generalize well

The design of your optimizer, learning rate, and batch size all influence which type of minimum your model finds. This has direct consequences for how your AI system performs in production.

The Three Components of Every Gradient Update

Every gradient descent step involves three calculations:

- Forward pass: Run inputs through the network to get predictions

- Loss computation: Compare predictions to ground truth using the loss function (cross-entropy for classification, MSE for regression)

- Backward pass (backpropagation): Compute gradients of the loss with respect to every weight in the network

After backpropagation computes the gradients using the chain rule, the optimizer applies the weight update: new_weight = old_weight - learning_rate × gradient. This loop repeats for thousands of iterations. For a complete explanation of how this fits into the full neural network architecture, see how deep learning works end-to-end.

How the Gradient Descent Algorithm Works Step by Step

Gradient descent trains a neural network by repeatedly computing prediction error, calculating how much each weight contributed to that error, and adjusting weights to reduce future errors. The key hyperparameter is the learning rate, which controls how large each weight adjustment is. Too high and training diverges; too low and training is impractically slow.

The Learning Rate: Your Most Critical Hyperparameter

The learning rate (often written as α or lr) determines the step size at each iteration. According to the PyTorch documentation, SGD’s default learning rate is 0.01, while Adam defaults to 0.001. These are reasonable starting points, but they’re rarely optimal for a specific task.

A learning rate that is too high causes the optimizer to overshoot minima — the loss oscillates or diverges entirely. A learning rate that is too low causes training to proceed extremely slowly, often getting stuck in suboptimal regions.

Three practical approaches to setting the learning rate:

- Learning rate finder: Increase the learning rate exponentially over a few hundred batches and plot loss. The optimal LR sits just before the loss begins to increase steeply (popularized by Leslie Smith in the 2017 cyclical learning rates paper)

- Learning rate schedules: Start higher, then systematically reduce. Cosine annealing and ReduceLROnPlateau (reduce when validation loss plateaus) are the most practical in PyTorch and TensorFlow

- Warm-up then decay: Start with a very small LR, increase linearly for the first few epochs, then decay — this pattern is standard in large language model training

Backpropagation: How Gradients Flow Through the Network

Backpropagation is the algorithm that efficiently computes gradients for every weight in a deep network by applying the chain rule of calculus from output back to input. Without it, computing gradients for a network with millions of parameters would be computationally infeasible.

The chain rule allows gradient computation to be factored layer by layer: the gradient at each layer depends on gradients flowing back from the layers above it. This is why the problem of vanishing gradients — where gradients become exponentially small as they propagate backward through many layers — was a major obstacle to training deep networks before modern techniques like ReLU activations and batch normalization.

For a hands-on implementation of backpropagation in a working neural network, see how to build a neural network from scratch.

Common mistake: Setting the same learning rate for all layers. In transfer learning scenarios, pretrained layers need much smaller LR updates than newly initialized layers — use layer-specific learning rates to avoid destroying pretrained representations.

Types of Gradient Descent: Batch, Stochastic, and Mini-Batch

The three main variants of gradient descent differ in how much training data they use to compute each gradient update. Mini-batch gradient descent is the universal standard for deep learning: it processes 32-256 samples per update, providing stable gradient estimates while remaining computationally efficient on modern GPU hardware.

Batch Gradient Descent

Batch gradient descent computes the gradient over the entire training dataset before making a single weight update. This produces a precise gradient estimate but is impractical for large datasets — you’d process millions of examples just to make one parameter update.

When it’s used: Rarely in deep learning. It’s mathematically clean and useful for very small datasets (a few hundred samples) where the entire dataset fits in memory.

Stochastic Gradient Descent (SGD)

Stochastic gradient descent (SGD) updates weights after every single training sample. Updates are fast but noisy — each sample produces a gradient that may point in a slightly wrong direction. This noise can actually help escape shallow local minima, which is why pure SGD occasionally produces better-generalizing models than smoother optimizers.

When it’s used: Online learning systems that process data streams in real time. Also still popular in NLP research for some training regimes.

Mini-Batch Gradient Descent (Industry Standard)

Mini-batch gradient descent processes a small batch of samples (the batch size) before updating weights. It’s the standard for all modern deep learning frameworks. According to the Adam optimizer paper by Kingma and Ba (2014), mini-batch processing is assumed as the baseline for all gradient-based deep learning optimization.

Typical batch sizes:

- 32: Default in many frameworks; good generalization, moderate speed

- 64-128: Faster training, marginally less generalization; common in computer vision

- 256-512: Used for large-scale training with data parallelism across multiple GPUs

Research published by Keskar et al. at ICLR 2017 demonstrated that large-batch training tends to converge to sharp minima with worse test accuracy, while small-batch training finds flatter minima that generalize better.

Ready to implement AI in your business? GrowthGear’s team has helped 50+ startups integrate deep learning solutions into their products and workflows. Book a Free Strategy Session to discuss your AI model training challenges.

Modern Gradient Descent Optimizers

Beyond the basic batch/stochastic/mini-batch distinction, the optimizer algorithm itself determines how gradients are applied to weight updates. The Adam optimizer is the default for most deep learning tasks due to its adaptive learning rates and momentum terms — it converges faster than vanilla SGD on the majority of architectures without requiring extensive learning rate tuning.

SGD with Momentum

Standard SGD accumulates a weighted moving average of past gradients (momentum) to smooth out noisy updates and accelerate movement through flat regions of the loss surface. The momentum parameter (typically 0.9) controls how much past gradient information is retained. This adaptive smoothing concept also appears outside ML entirely — adaptive moving averages in time series analysis use a similar efficiency ratio to adjust smoothing speed without any gradient computation.

Best for: Image classification with ResNets and other standard convolutional architectures, where SGD with momentum + cosine LR schedule often achieves slightly better final accuracy than Adam after full training.

Adam (Adaptive Moment Estimation)

Adam combines two ideas: momentum (first moment) and adaptive learning rates based on the squared gradient (second moment). The adaptive component means each parameter gets its own effective learning rate, scaled by how frequently it has been updated. Parameters that receive sparse gradients (common in NLP tasks with large vocabularies) get larger updates; frequently updated parameters get smaller updates.

Default hyperparameters from the original Adam paper: β1=0.9, β2=0.999, ε=1e-8, lr=0.001. These defaults work well across a wide range of tasks, which is why Adam became the go-to optimizer for deep learning.

Best for: Transformer architectures, RNNs/LSTMs, most NLP tasks, and any scenario where you want fast convergence without extensive hyperparameter tuning. Transformers like BERT and GPT are almost exclusively trained with Adam or its variants.

For more on how transformers use gradient descent during training, see what is a transformer in machine learning.

RMSprop

RMSprop (Root Mean Square Propagation) adapts the learning rate per parameter using a moving average of squared gradients, similar to Adam’s second moment — but without the first moment (momentum) component. It was developed by Geoffrey Hinton and is effective for recurrent neural networks where gradients vary significantly over time.

Best for: RNNs, LSTMs, and reinforcement learning scenarios. Less common than Adam for new projects.

AdaGrad

AdaGrad accumulates all past squared gradients and divides the learning rate by their square root. This causes learning rates to decay monotonically — beneficial for sparse features early in training but problematic for long training runs (the learning rate eventually drops so low that learning stops).

Best for: Sparse data problems and NLP tasks with rare words/features. Largely replaced by Adam for most use cases.

Optimizer Comparison

| Optimizer | Adaptive LR | Momentum | Convergence Speed | Best Use Case |

|---|---|---|---|---|

| SGD | No | Optional | Slow | CV fine-tuning, final polish |

| SGD + Momentum | No | Yes | Moderate | Image classification |

| Adam | Yes | Yes | Fast | Default for most tasks |

| AdamW | Yes | Yes | Fast | Transformers (weight decay fix) |

| RMSprop | Yes | No | Moderate | RNNs, RL environments |

| AdaGrad | Yes (decaying) | No | Fast early, stalls | Sparse features |

AdamW is a variant of Adam that decouples weight decay from the gradient update — it’s now the standard for training transformer models according to TensorFlow’s official documentation.

Applying Gradient Descent in Your Business AI Projects

For business AI projects, the practical application of gradient descent comes down to five decisions: optimizer choice, learning rate, batch size, learning rate schedule, and stopping criteria. Defaulting to Adam with a cosine LR schedule and batch size 32-64 handles 80% of use cases. The remaining 20% requires profiling your specific model, data, and task.

Practical Optimizer Setup for Common Business Use Cases

Fraud detection / tabular classification: Adam (lr=0.001), batch size 64, ReduceLROnPlateau with patience=5. See how to train machine learning models for the full training pipeline.

NLP / text classification: AdamW (lr=2e-5 when fine-tuning pretrained models like BERT), batch size 16-32, linear warmup for first 10% of training steps.

Computer vision: SGD with momentum (0.9), batch size 256, cosine LR schedule. Many production image models (ResNet, EfficientNet) use this combination because it achieves slightly better final accuracy than Adam after sufficient training epochs.

Time series / demand forecasting: Adam or RMSprop, batch size 32-64, learning rate 0.001, dropout regularization to prevent overfitting on short sequences. See our full guide to deep learning for time series forecasting for model-specific optimizer recommendations.

Learning Rate Scheduling

A fixed learning rate is almost never optimal across the full training run. Learning rate schedules reduce LR as training progresses, allowing the optimizer to fine-tune weights without oscillating around a minimum.

Three effective schedules:

- Cosine annealing: Reduces LR following a cosine curve from initial to near-zero. Used in ImageNet training and most computer vision benchmarks

- Step decay: Multiply LR by 0.1 every N epochs. Simple, effective, widely used in production

- ReduceLROnPlateau: Monitor validation loss; when it stops improving for

patienceepochs, multiply LR byfactor(typically 0.1-0.5). Most practical for business projects where optimal schedule isn’t known in advance

Teams using AI tools for digital marketing optimization and predictive analytics benefit from models trained with proper LR scheduling — the accuracy difference between a fixed LR and a well-scheduled LR is typically 2-8% on real business datasets.

Gradient Clipping for Unstable Training

If you’re training RNNs or very deep networks and observing loss spikes or NaN values, gradient clipping is the fix. It caps the gradient norm at a threshold (typically 1.0) before applying the weight update, preventing any single batch from causing catastrophically large updates.

In PyTorch: torch.nn.utils.clip_grad_norm_(model.parameters(), max_norm=1.0) — call this before optimizer.step().

When planning your AI development roadmap, working with a team that understands these technical nuances is critical to avoiding costly training failures. A structured business development strategy for AI adoption includes allocating time for model training iteration, not just deployment.

Common Gradient Descent Problems and How to Fix Them

Every deep learning practitioner encounters training failures that look catastrophic but have straightforward causes. The most common issues — diverging loss, vanishing gradients, and validation plateaus — each produce distinct symptoms and respond to specific fixes. Diagnosing the problem correctly saves days of trial-and-error debugging and prevents restarting expensive training runs from scratch.

Loss Diverges (Goes to Infinity or NaN)

Symptom: Loss increases rapidly or becomes NaN after a few batches.

Cause: Learning rate is too high, causing weight updates to overshoot.

Fix: Reduce LR by 10x. If the problem persists, check for:

- Poorly normalized input data (normalize to zero mean, unit variance — see how to determine neural network input layers for a full normalization guide covering StandardScaler, MinMaxScaler, and data leakage prevention)

- Exploding gradients (add gradient clipping)

- Bad initialization (use PyTorch’s default Xavier/He initialization)

Training Loss Decreases but Validation Loss Plateaus (Overfitting)

Symptom: Training accuracy climbs while validation accuracy stalls or drops.

Cause: The model has memorized training data rather than learning generalizable patterns.

Fix:

- Reduce model capacity (fewer layers or units)

- Add dropout (

nn.Dropout(p=0.3)is a standard starting point) - Add L2 weight decay (

weight_decay=1e-4in Adam) - Increase training data or use data augmentation

Training Stalls Early (Vanishing Gradients)

Symptom: Loss stops improving after a few epochs, even with a reasonable learning rate. Earlier layers in the network have near-zero gradient magnitudes.

Cause: Gradients become exponentially small as they propagate backward through many layers, particularly with sigmoid or tanh activations.

Fix:

- Switch to ReLU or its variants (Leaky ReLU, GELU) in hidden layers

- Add batch normalization between layers

- Use residual connections (skip connections) if depth exceeds 10-15 layers

- Review types of neural networks to choose an architecture with built-in residual connections

Model Trains Slowly Without Improving

Symptom: Loss decreases very slowly — training would require weeks to reach acceptable accuracy.

Cause: Learning rate is too low, or the model is stuck in a flat saddle point region.

Fix:

- Increase LR by 5-10x (carefully, watching for divergence)

- Switch from SGD to Adam — adaptive learning rates naturally escape saddle points faster

- Check that your data pipeline isn’t the bottleneck (GPU should be >90% utilized during training)

Take the Next Step

Gradient descent tuning separates functional AI models from production-ready ones. Whether you’re optimizing a fraud detection model, a recommendation engine, or a forecasting system, getting the optimizer, learning rate, and batch strategy right determines whether your project delivers measurable ROI.

GrowthGear’s team has guided 50+ startups and growth-stage companies through deep learning implementation — from selecting the right architecture to diagnosing training failures in production. We help you avoid the trial-and-error phase that typically costs teams 3-6 months.

Book a Free Strategy Session →

Gradient Descent Optimizer Summary

| Aspect | Recommendation | Notes |

|---|---|---|

| Default optimizer | Adam (lr=0.001) | Works across 80%+ of tasks without tuning |

| Transformer/NLP | AdamW (lr=2e-5) | Better weight decay handling |

| Computer vision (long training) | SGD + momentum | Marginally better final accuracy |

| Batch size | 32-64 | Balances speed and generalization |

| LR schedule | Cosine or ReduceLROnPlateau | Always use a schedule vs. fixed LR |

| Gradient clipping | max_norm=1.0 | Use for RNNs and very deep networks |

| Vanishing gradients fix | ReLU + batch norm | Standard for networks > 5 layers |

| Exploding gradients fix | Gradient clipping | Apply before optimizer.step() |

Sources & References

- Kingma, D.P. & Ba, J. — “Adam: A Method for Stochastic Optimization” — Original Adam paper introducing adaptive moment estimation with default hyperparameters β1=0.9, β2=0.999 (2014)

- Ruder, S. — “An Overview of Gradient Descent Optimization Algorithms” — Comprehensive comparison of SGD variants, momentum, AdaGrad, RMSprop, and Adam with practical guidance (2016)

- PyTorch Optimizer Documentation — Official documentation for all PyTorch optimizer implementations including default hyperparameters

- TensorFlow Keras Optimizers — Official TensorFlow/Keras optimizer API including AdamW implementation notes

Frequently Asked Questions

Gradient descent is the optimization algorithm that adjusts a neural network's weights to minimize prediction error. It calculates the gradient of the loss function and updates weights in the opposite direction, iterating until the model reaches minimum error.

SGD updates weights with a fixed learning rate, requiring manual tuning. Adam adapts the learning rate per parameter using momentum estimates, converging faster on most tasks. Adam is the default choice; SGD with momentum works better for some computer vision tasks.

Start with 0.001 for Adam and 0.01 for SGD. Use a learning rate finder (increase LR exponentially until loss spikes) to identify the optimal range. Learning rate schedules that decay over training consistently outperform fixed rates.

Common causes: learning rate too high (loss oscillates or diverges), learning rate too low (training is extremely slow), vanishing gradients (deep networks stop learning), or saddle points in the loss landscape. Each has specific diagnostic signs and fixes.

Mini-batch gradient descent updates weights after processing a small subset of training data (typically 32-256 samples). It balances the stability of batch gradient descent with the speed of stochastic gradient descent, and is the standard approach for training deep learning models.

Yes. Gradient descent (or a variant) is the universal training mechanism for neural networks. The difference is which optimizer variant you use: SGD, Adam, RMSprop, AdaGrad, or others — all are gradient descent algorithms with different update strategies.

Training time ranges from minutes (small classification models) to weeks (large language models). For most business use cases — fraud detection, churn prediction, demand forecasting — expect 2-24 hours on GPU hardware with a well-tuned optimizer.