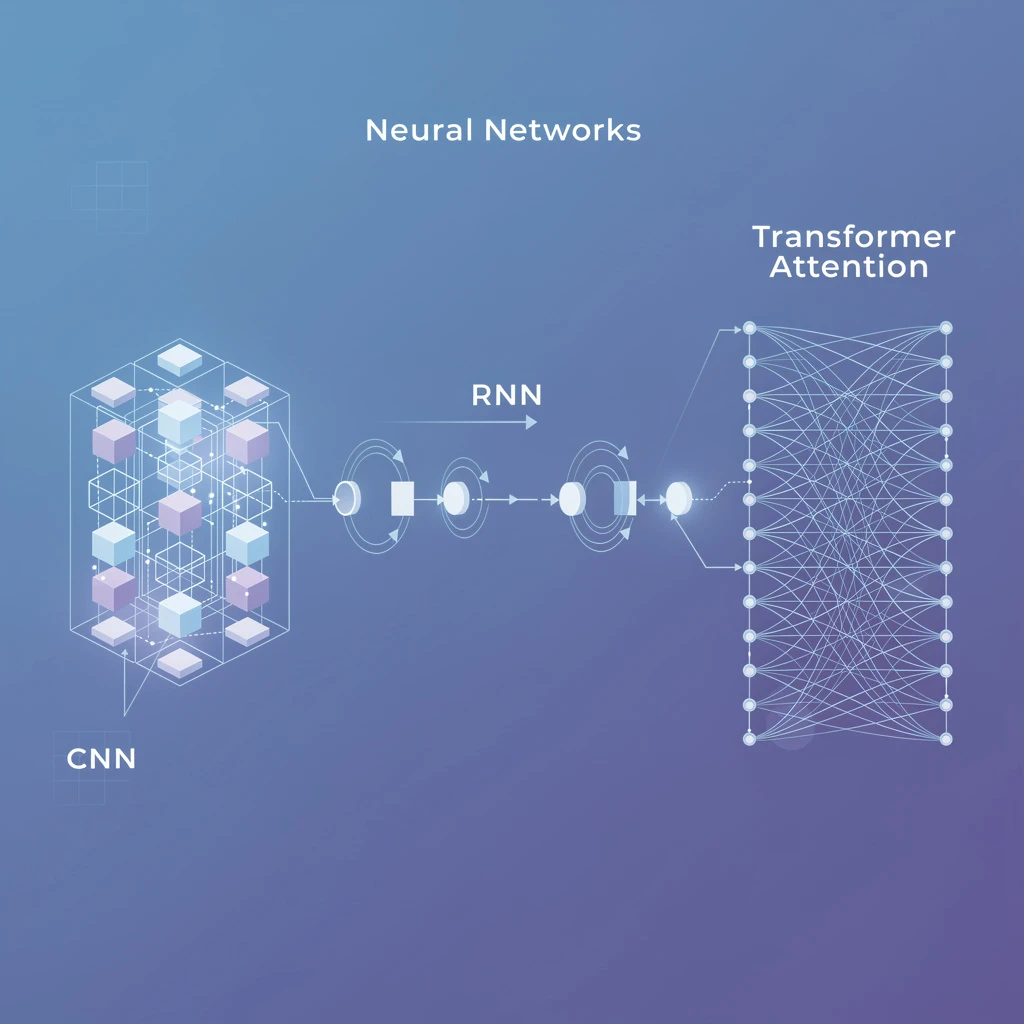

Neural networks are not one-size-fits-all. A convolutional neural network that achieves 99% accuracy on image classification will perform poorly on text generation. An LSTM that excels at time-series forecasting is the wrong tool for detecting objects in video frames.

Choosing the right architecture is one of the most consequential technical decisions in any AI project — and most business leaders make it without a framework. This guide gives you that framework: a clear breakdown of every major neural network type, what it does well, where it fails, and which business problems each architecture actually solves. Once you’ve chosen an architecture, our complete guide to building a neural network covers the full training, evaluation, and deployment process. If you’re new to the field, first read how deep learning works for a grounding in the training mechanics — backpropagation, gradient descent, and loss functions — that all these architectures share.

Feedforward and Convolutional Neural Networks

These two architectures form the foundation of most practical deep learning. If you are starting an AI project involving structured data or images, you will encounter one of these first.

Feedforward Networks (Multilayer Perceptrons)

A feedforward network, also called a multilayer perceptron (MLP), is the simplest neural network architecture. Data flows in one direction — from input through one or more hidden layers to an output — with no loops or cycles.

Each neuron in a layer receives inputs from all neurons in the previous layer, applies a weighted sum, adds a bias, then passes the result through an activation function (ReLU, sigmoid, or tanh). The network learns by adjusting weights through backpropagation to minimize prediction error.

Where MLPs excel:

- Tabular data classification (customer churn, fraud detection, credit scoring)

- Regression tasks (price prediction, demand forecasting)

- Feature embeddings as components within larger architectures

Where MLPs struggle:

- Images: They flatten 2D spatial structure, losing local pattern information

- Sequences: No memory mechanism means no ability to capture temporal dependencies

- High-dimensional inputs: Parameter count explodes with input size

A 28×28 image flattened to an MLP input creates 784 input neurons. A 1920×1080 image creates 2,073,600 — a computationally impractical starting point that ignores all spatial relationships. This is the problem CNNs solve. For a detailed breakdown of how to configure the right input layer for any architecture, see the guide on how to determine neural network input layers.

Convolutional Neural Networks (CNNs)

Convolutional Neural Networks exploit spatial structure through learned filters. Instead of connecting every input pixel to every hidden neuron, a convolutional layer slides a small filter (typically 3×3 or 5×5) across the input, detecting local patterns regardless of position. For a deeper definitional walkthrough of what a convolutional neural network is — including kernel mechanics, pooling, and tasks beyond classification — see the dedicated CNN explainer.

Three properties make CNNs effective for visual data:

- Local connectivity: Each neuron only looks at a small region, matching how low-level visual features (edges, textures) are local

- Weight sharing: The same filter is applied across the entire input, dramatically reducing parameter count

- Hierarchical features: Early layers detect edges, middle layers detect shapes, deep layers detect complex objects

| CNN Component | Function |

|---|---|

| Convolutional layer | Detect local patterns using learnable filters |

| Pooling layer | Downsample spatial dimensions, add position invariance |

| Batch normalization | Stabilize training, enable higher learning rates |

| Fully connected layer | Classify based on learned features |

| Dropout | Regularize to prevent overfitting |

Where CNNs excel:

- Image classification (ResNet achieves 96.7% on ImageNet top-5)

- Object detection (YOLO, Faster R-CNN)

- Medical imaging (radiology, pathology, dermatology AI)

- Video analysis for surveillance and manufacturing QA

- Any domain with grid-structured, spatially-correlated data

Where CNNs struggle:

- Sequential data without clear spatial structure

- Long-range dependencies across large images (transformers outperform here at scale)

- Small datasets without transfer learning

CNNs form the backbone of nearly every visual AI system deployed in production. If your business involves computer vision applications — quality control, retail analytics, medical imaging — CNNs are almost certainly part of the stack. For a detailed implementation guide covering CNN architectures (ResNet, EfficientNet, MobileNet), training data requirements, and deployment options, see our CNN image classification guide.

Recurrent and Sequence-Based Networks

When data has order — text, audio, financial time series, sensor readings — the network needs a memory mechanism. The architectures in this section are purpose-built for sequential data.

Recurrent Neural Networks (RNNs)

A Recurrent Neural Network processes sequences by maintaining a hidden state: a compressed representation of everything the network has seen so far. At each timestep, it combines the current input with the previous hidden state to produce a new hidden state and an output.

The recurrent connection creates a feedback loop that, in theory, gives RNNs indefinite memory. The 2024 language model landscape has largely moved beyond vanilla RNNs, but they remain relevant for:

- Simple sequence labeling (POS tagging, named entity recognition at the edge)

- Time-series classification on constrained hardware

- Educational and prototyping contexts

The core limitation: vanishing gradients. When training with backpropagation through time (BPTT), gradients can shrink exponentially as they propagate back through many timesteps. An RNN trained on 500-token sequences “forgets” what happened at token 50. This is the problem LSTMs and GRUs solve.

Long Short-Term Memory Networks (LSTMs)

LSTMs (introduced by Hochreiter & Schmidhuber in 1997) add gating mechanisms to the recurrent structure, allowing the network to selectively remember, forget, and output information across long sequences.

Three gates control information flow:

- Forget gate: Decides what fraction of the previous cell state to discard

- Input gate: Decides what new information to write into the cell state

- Output gate: Decides what part of the cell state to expose as the hidden state

This architecture can maintain relevant information across hundreds or thousands of timesteps — a capability vanilla RNNs cannot achieve reliably.

Where LSTMs excel:

- Time-series forecasting (energy demand, stock prices, sensor data)

- Streaming data with strict latency constraints

- On-device inference where model size is a hard constraint

- Anomaly detection in sequential operational data

Where LSTMs struggle:

- Long documents where transformers have a 15–40% accuracy advantage

- Parallelization: LSTM processing is inherently sequential, making GPU utilization poor

- Training speed compared to transformers on equivalent tasks

A practical note for business applications: LSTMs running locally on edge hardware (IoT sensors, embedded systems) remain relevant precisely because they are small and don’t require cloud API calls. For server-side NLP at scale, transformers have superseded them.

Ready to deploy neural networks in your business? GrowthGear has helped 50+ startups implement the right deep learning architectures for their specific use cases — from computer vision QA systems to LSTM-powered demand forecasting. Book a Free Strategy Session to identify which network type fits your problem.

Transformers

Transformers, introduced in “Attention Is All You Need” (Vaswani et al., 2017), replaced sequential processing with parallel self-attention. Rather than processing one token at a time and passing a hidden state, transformers compute relationships between all tokens simultaneously.

For a detailed technical breakdown of how transformers work, see our comprehensive guide to what is a transformer in machine learning. If you want to understand how ChatGPT specifically fits into this taxonomy — and why its decoder-only design matters for business applications — read our explainer on whether ChatGPT is technically a neural network. For a side-by-side comparison of decoder-only, encoder-only, and encoder-decoder LLM architectures along with guidance on matching each to your use case, see do LLMs use neural networks: the architecture explained.

Where transformers excel:

- All natural language tasks: generation, classification, summarization, translation

- Code generation (GitHub Copilot reports 55% faster task completion)

- Multimodal tasks (text + images, text + audio)

- Any task where long-range dependencies matter

Business impact of transformers in 2026:

- LLM APIs cost $0.002–$0.015 per 1K tokens for frontier models

- 79% of enterprise AI deployments now include a transformer component (McKinsey, 2025)

- Average B2B team using transformer-powered tools saves 5–8 hours/week per knowledge worker

The transformer’s dominance has reshaped how businesses think about natural language processing — from custom model development to API-first workflows.

Generative and Specialized Architectures

Beyond the core supervised-learning architectures, several specialized network types address generation, compression, and graph-structured data.

Generative Adversarial Networks (GANs)

GANs consist of two neural networks in adversarial competition:

- Generator: Takes random noise as input, produces synthetic data (images, audio, video)

- Discriminator: Distinguishes between real and generated data

The generator improves to fool the discriminator; the discriminator improves to detect the generator. This adversarial loop produces progressively more realistic synthetic output. Introduced by Ian Goodfellow et al. in 2014, GANs achieved photorealistic image synthesis that was previously impossible.

Business applications of GANs:

- Synthetic training data: Generate labeled examples for rare events (fraud, defects) when real examples are scarce

- Product design: Rapid visualization of product variants without physical prototyping

- Media production: AI-assisted video production, background generation, face anonymization

- Drug discovery: Generate molecular structures with target properties

GAN limitations:

- Training instability: Mode collapse (generator produces limited varieties), oscillating losses, and training divergence are common without careful hyperparameter tuning

- Evaluation difficulty: Measuring output quality requires human judgment or specialized metrics (FID, IS scores)

- Superseded for many tasks: Diffusion models (DALL-E 3, Stable Diffusion, FLUX) now produce higher-quality images than GANs for most applications

Autoencoders

An autoencoder compresses input data into a lower-dimensional latent representation (encoding), then reconstructs the original from that representation (decoding). The network is trained to minimize reconstruction error, forcing it to learn efficient data representations.

| Variant | Use Case |

|---|---|

| Vanilla autoencoder | Dimensionality reduction, feature learning |

| Variational autoencoder (VAE) | Generative modeling, data augmentation |

| Denoising autoencoder | Signal cleanup, noise removal |

| Sparse autoencoder | Interpretability research, feature extraction |

Where autoencoders excel:

- Anomaly detection: Reconstruct normal operational data well; anomalies produce high reconstruction error, flagging outliers without labeled examples

- Data compression: Domain-specific compression that outperforms generic algorithms on structured data

- Pre-training: Initialize representations before supervised fine-tuning with limited labels

For anomaly detection in manufacturing sensor data, autoencoders trained on “normal” operation patterns routinely achieve 90–95% precision at detecting equipment faults before failure.

Graph Neural Networks (GNNs)

Most neural networks assume data is arranged in a grid (images) or a sequence (text). Graph Neural Networks operate on graph-structured data — nodes connected by edges — where relationships between entities matter as much as the entities themselves.

GNNs work by aggregating information from a node’s neighbors iteratively, building richer representations that encode local and global graph structure.

Where GNNs excel:

- Fraud detection: Model transaction networks where fraud patterns emerge from relationships between accounts, merchants, and behaviors — not individual transactions in isolation

- Recommendation systems: User-item interaction graphs; Pinterest’s PinSage GNN improved recommendation quality by 30% at billion-scale

- Drug discovery: Molecular graphs (atoms as nodes, bonds as edges) for predicting compound properties

- Supply chain optimization: Model interdependencies between suppliers, logistics nodes, and demand signals

GNNs are particularly powerful when the structure of relationships is itself informative — something flat tabular models fundamentally cannot capture. For a complete business-focused breakdown of how GNNs work and where they deliver ROI, see our deep graph learning guide.

How to Choose the Right Neural Network Architecture

The architecture selection decision has a 3-step framework that cuts through the complexity:

Step 1: Characterize Your Data

| Data type | Starting architecture |

|---|---|

| Images, video frames | CNN or Vision Transformer (ViT) |

| Text, language | Transformer (use an API first) |

| Time series, audio | LSTM / Transformer depending on length |

| Tabular (structured) | MLP or gradient boosted trees (often beats NNs) |

| Graph-structured | GNN |

| Unlabeled data (anomaly) | Autoencoder |

| Need to generate new examples | GAN or diffusion model |

Step 2: Assess Your Constraints

Architecture selection is always a tradeoff between capability and cost:

- Accuracy vs. latency: Transformers are most accurate; LSTMs and CNNs run faster on edge hardware

- Data volume: Transformers require large datasets or pre-training. CNNs with transfer learning work well with as few as 1,000 labeled examples.

- Infrastructure: If you lack GPU infrastructure, default to API-based transformer access rather than self-hosted models

- Interpretability: Autoencoders and simpler MLPs are easier to explain to regulators. Transformers with attention weights offer partial interpretability.

GrowthGear’s standard recommendation for businesses starting their AI journey: default to transformer APIs (GPT-4o, Claude, Gemini) for language tasks, pre-trained CNNs with transfer learning for vision tasks, and avoid training from scratch until you have validated the use case at scale. This approach mirrors how AI implementation in business is done effectively — start with what works, then optimize.

Step 3: Run a Baseline Experiment

Before committing to a complex architecture:

- Build the simplest viable model first — an MLP often achieves 80% of a CNN’s accuracy on semi-structured data at 10% of the implementation cost

- Measure against a non-ML baseline — can a decision tree or regression model achieve acceptable accuracy? If yes, use it.

- Use pre-trained models via transfer learning — for most business problems, fine-tuning an existing model outperforms training from scratch with 15–60× less compute

- Define your success metric before building — accuracy, recall, precision, latency, or cost per inference lead to fundamentally different architecture choices

The architecture that achieves your business metric at your budget constraint wins, regardless of which one is “most technically impressive.” For teams using AI tools to augment marketing automation or CRM workflows, API-wrapped transformers provide 90% of the value with 10% of the complexity.

The PyTorch documentation and TensorFlow/Keras guides provide the implementation starting points for all architectures covered here. For benchmarks across architectures on standard tasks, the Papers With Code leaderboards are the most current resource.

Take the Next Step

Choosing the right neural network architecture is a decision that compounds. The team that correctly matches CNN + transfer learning to an image classification problem will deploy in 6 weeks; the team that builds a transformer from scratch for the same problem will spend 6 months. GrowthGear has guided 50+ startups through exactly these decisions — helping them avoid expensive architectural mistakes and ship AI systems that actually move business metrics.

Whether you are evaluating your first computer vision system, building an NLP pipeline, or scaling a model that’s already working, the right architecture decision changes the economics of the entire project.

Book a Free Strategy Session →

Frequently Asked Questions

The main types are feedforward networks (MLP), convolutional neural networks (CNNs), recurrent neural networks (RNNs), LSTMs, transformers, GANs, autoencoders, and graph neural networks. Each suits different data types and tasks.

CNNs process grid-like data (images) using convolutional filters to detect spatial patterns. RNNs process sequential data (text, time-series) by maintaining a hidden state across timesteps. They solve fundamentally different problems.

Convolutional Neural Networks (CNNs) are the standard choice for image recognition. Vision Transformers (ViT) now match or exceed CNN accuracy at scale, but CNNs remain more efficient for edge and embedded applications.

ChatGPT uses a transformer architecture, specifically a decoder-only transformer (GPT = Generative Pre-trained Transformer). It uses self-attention mechanisms to process and generate text token by token.

Use LSTMs for low-latency edge deployments, small datasets, or streaming time-series where sequential processing is a constraint. Use transformers when you need state-of-the-art accuracy on text, have sufficient compute, and need to model long-range dependencies.

A GAN (Generative Adversarial Network) consists of two networks—a generator that creates synthetic data and a discriminator that evaluates its realism. They train against each other until the generator produces highly realistic outputs.

There are 8–12 major neural network families depending on classification, with dozens of specific architectures within each. The core families are MLPs, CNNs, RNNs/LSTMs, transformers, GANs, autoencoders, GNNs, and spiking neural networks.